The Centaurs

We’ve talked about the nine Gardnerian intelligences and how AI appears to have developed competence across many of them, even coming close to emotional intelligence (EQ). But “appears” is the pivot here: what large language models and affective computing systems actually do is produce a predicted output without possessing the underlying cognitive architecture. This distinction matters because it draws the line between humans and machines and defines the relationship between them.

In the workplace, the question today is not whether AI will replace us all, but which roles are susceptible to AI automation and which will resist it. The answer turns on three areas in which humans retain a genuine and lasting advantage over algorithms.

First, creativity, in the sense of producing original and novel concepts. Both machines and humans can recombine existing patterns, but humans can also restructure the problem space and transform reality (Boden, 2004). Second, the capacity for complex social interaction and an understanding of the nuances of society and culture, which depends on embodied experience, enculturation and somatic markers (Damasio, 1994), not merely on linguistic processing. Third, the breadth and depth of human perception, that is, the context-sensitive construction of meaning (Clark, 2013). These three human capacities constitute the bottleneck to AI automation.

Nevertheless, some jobs will be lost or displaced in this ongoing technological revolution. Roles that consist of mundane intellectual work, routine and repetitive tasks, pattern recognition in structured data, or information retrieval and synthesis will probably go, or are already being displaced. But roles that correspond more closely to uniquely human capabilities and demand creativity, social interaction and judgment are much harder to automate (Frey and Osborne, 2017).

What follows from this logic is that humans must not ignore AI; they must learn to use it and work with it. This is where centaurs come into play.

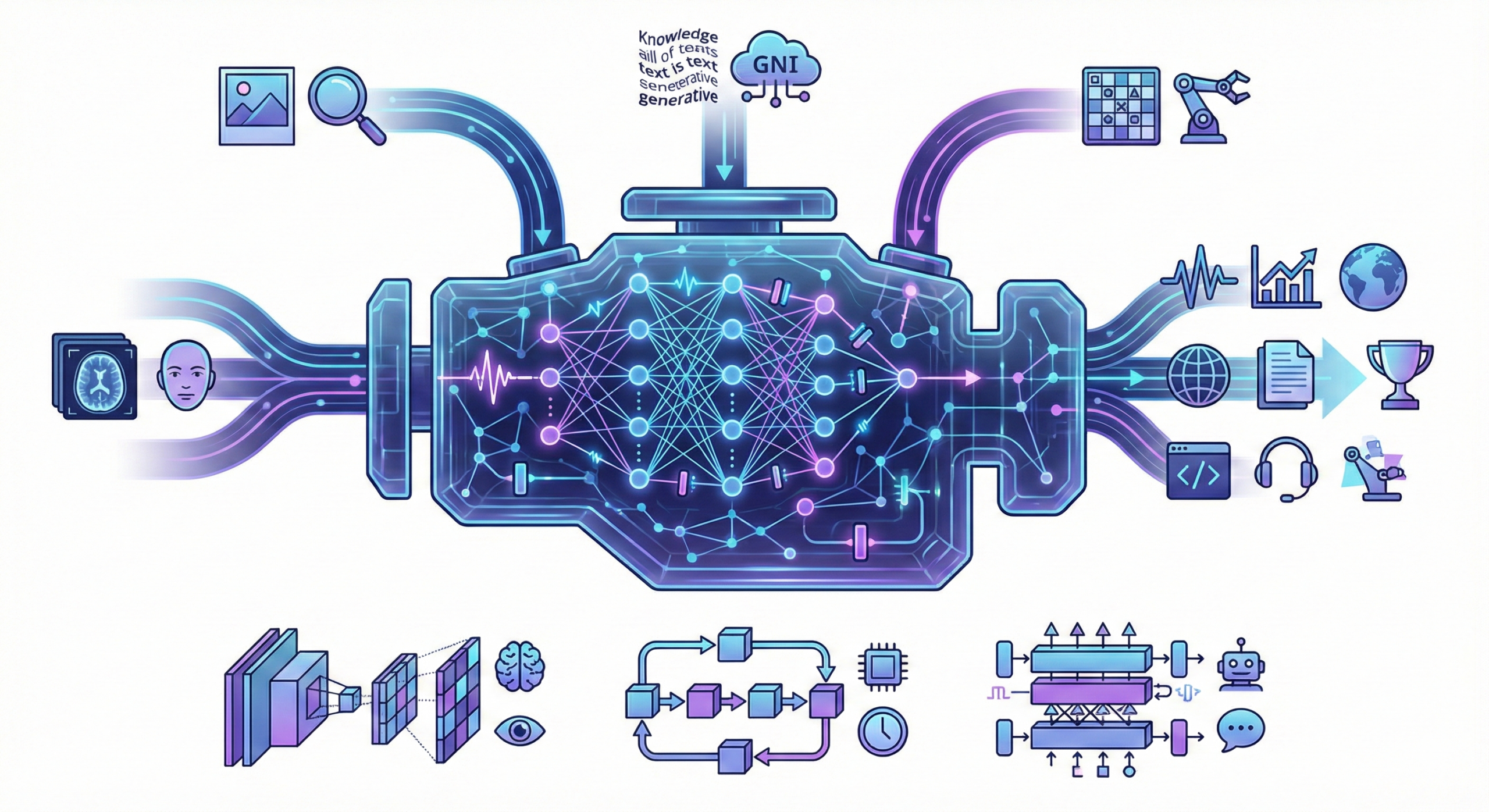

The centaur of Greek mythology was half horse, half man, a fusion of the two. The centaur worker of the future is not someone who delegates to AI and supervises the output. They are someone who integrates AI into their own cognitive workflow, using algorithmic speed and power to extend their human capacities. The fusion is the point. Neither half is dispensable.

Take psychology, for example. A psychologist is not going to be replaced by AI. The relationship with a patient and the capacity to read between the lines, to interpret non-verbal communication and to adapt to a particular individual are precisely the social and perceptual capacities that cannot be automated.

But parts of what a psychologist does (literature review, assessment scoring, pattern identification in research data, statistical analysis, progress tracking) can benefit from AI augmentation. The psychologist who learns to use AI effectively will be more productive and better at their job. Psychologists who refuse to engage with the technology will find themselves outpaced, not by AI, but by their AI-augmented peers (Dell’Acqua et al., 2023). The competitive displacement is human-to-human, not machine-to-human.

The threat is not that algorithms will do your job. The threat is that someone who does your job and understands how to leverage algorithms will do it significantly better than you.

However, the centaur model assumes a human half that is capable of meaningful contribution: one with basic knowledge of mathematics and culture, the fundamentals of the humanities, literacy and data analysis. Without these, the human is no longer directing the AI but is directed by it, unable to assess whether an output is sound, valid, relevant or appropriate. Such a situation is more dangerous than automation, because it carries the illusion of human oversight when in reality there is none, and it leads such individuals to abdicate their thinking to the machines (Wiener, 1960).

Only those who invest in their future and in their human-specific capabilities will build genuine human-AI collaborations and become centaurs. For everyone else, the risk of job displacement is not a future threat but a present one.

Reference list:

Boden, M. A. (2004) The Creative Mind: Myths and Mechanisms. 2nd edn. London: Routledge. Available at: https://www.routledge.com/The-Creative-Mind-Myths-and-Mechanisms/Boden/p/book/9780415314534

Clark, A. (2013) ‘Whatever next? Predictive brains, situated agents, and the future of cognitive science’, Behavioral and Brain Sciences, 36(3), pp. 181-204. Available at: https://doi.org/10.1017/S0140525X12000477

Damasio, A. R. (1994) Descartes’ Error: Emotion, Reason, and the Human Brain. New York: G. P. Putnam’s Sons.

Dell’Acqua, F., McFowland III, E., Mollick, E. R., Lifshitz-Assaf, H., Kellogg, K. C., Rajendran, S., Krayer, L., Candelon, F. and Lakhani, K. R. (2023) ‘Navigating the Jagged Technological Frontier: Field Experimental Evidence of the Effects of AI on Knowledge Worker Productivity and Quality’, Harvard Business School Technology & Operations Mgt. Unit Working Paper No. 24-013. Available at: https://doi.org/10.2139/ssrn.4573321

Frey, C. B. and Osborne, M. A. (2017) ‘The future of employment: How susceptible are jobs to computerisation?’, Technological Forecasting and Social Change, 114, pp. 254-280. Available at: https://doi.org/10.1016/j.techfore.2016.08.019

Thompson, C. (2013) Smarter Than You Think: How Technology Is Changing Our Minds for the Better. New York: Penguin Press. Available at: https://www.penguin.co.uk/books/189765/smarter-than-you-think-by-thompson-clive/9780007427796

Wiener, N. (1960) ‘Some Moral and Technical Consequences of Automation’, Science, 131(3410), pp. 1355-1358. Available at: https://doi.org/10.1126/science.131.3410.1355